Megalo Web Services - Screaming Frog SEO Spider v19.2

Latest Updated Files

-

WhatsDesk – Smart WhatsApp Support Ticketing & Sales Automation Tool

.thumb.png.17f220c548e942797c67595c75ba662a.png)

- 3 Downloads

- 0 Comments

-

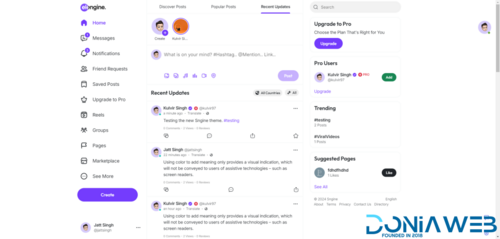

Xngine - The Ultimate Sngine Theme

- 878 Downloads

- 28 Comments

-

GROWX SMM 10IN1 THEMES

- 7 Downloads

- 0 Comments

-

Nova SMM Panel Script in USD

- 11 Downloads

- 0 Comments

-

Bicrypto - Crypto Trading Platform, Binary Trading, Investments, Blog, News & More!

- 34 Purchases

- 24 Comments

-

Bicrypto - Crypto Trading Platform, Binary Trading, Investments, Blog, News & More!

- 90 Purchases

- 115 Comments

-

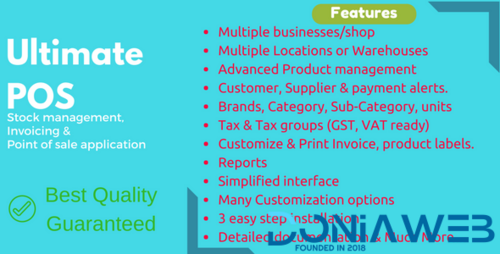

Ultimate POS - Best ERP, Stock Management, Point of Sale & Invoicing application + Addons

- 15,930 Downloads

- 57 Comments

-

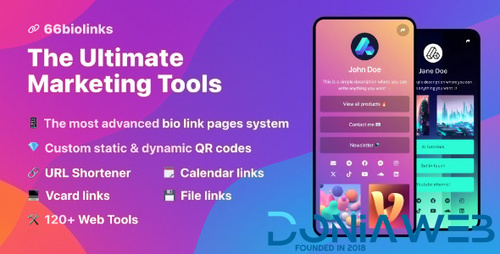

66biolinks - Bio Links, URL Shortener, QR Codes & Web Tools (SAAS) [Extended License]

- 56 Purchases

- 70 Comments

-

66socialproof - Social Proof & FOMO Widgets Notifications (SAAS) [Extended License]

.thumb.jpg.eee625ad373b163ec58239227d31fed4.jpg)

- 63 Purchases

- 38 Comments

-

WhatsML – AI-Based Marketing & Chat Automation & Bulk Sender Tools for WhatsApp (SaaS)

- 35 Downloads

- 6 Comments

-

All Marketplace - 33 Paid Premium Extensions + 10 Premium Themes | MagicAi

- 81 Purchases

- 754 Comments

-

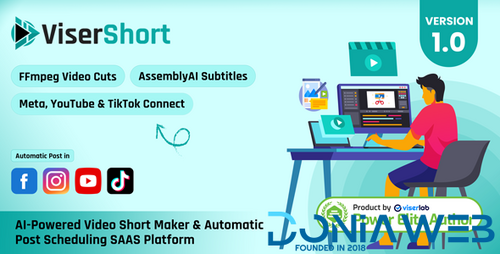

ViserShort - AI Powered Video Short Maker And Automatic Post Scheduling SAAS Platform

- 0 Purchases

- 0 Comments

-

YouLab - AI Powered YouTube Comment Response Automation SAAS Platform

- 0 Purchases

- 0 Comments

-

Elementra - 100% Elementor WordPress Theme

- 55 Downloads

- 1 Comments

-

Verdantia - Landscaping and Garden WordPress Theme

- 14 Downloads

- 0 Comments

-

The7 - Website and eCommerce Builder for WordPress

- 43 Downloads

- 0 Comments

-

Hoteller - Booking WordPress Theme

- 13 Downloads

- 0 Comments

-

TeeSpace - Print Custom T-shirt Designer WordPress theme

.thumb.jpg.dca0571de3cee236432ab9dec0fd1daf.jpg)

- 90 Downloads

- 0 Comments

-

GoldSmith - Jewelry Store WooCommerce Elementor Theme

- 10 Downloads

- 0 Comments

-

Merto - Multipurpose WooCommerce WordPress Theme

111.thumb.jpg.c969048bcbcc890a01428560846b5039.jpg)

- 69 Downloads

- 1 Comments
